Emerging Biomarkers for Early Cancer Detection: A 2025 Review of Innovations, Applications, and Clinical Translation

This article provides a comprehensive analysis of the rapidly evolving landscape of emerging biomarkers for early cancer detection, tailored for researchers, scientists, and drug development professionals.

Abstract

This article provides a comprehensive analysis of the rapidly evolving landscape of emerging biomarkers for early cancer detection, tailored for researchers, scientists, and drug development professionals. It explores the foundational science behind novel biomarkers such as circulating tumor DNA (ctDNA), exosomes, and microRNAs. The scope extends to methodological advancements in liquid biopsy and multi-omics technologies, tackles critical troubleshooting and optimization challenges in clinical translation, and offers a comparative analysis of biomarker validation and regulatory pathways. By synthesizing current research and future trends, this resource aims to inform strategic decisions in biomarker discovery and development.

The New Frontier: Understanding the Science Behind Emerging Cancer Biomarkers

The Critical Role of Early Detection in Improving Cancer Survival Rates

Cancer remains one of the most significant public health challenges globally, with 20 million new cases and 10 million cancer-associated deaths reported in 2022 alone, making it the second leading cause of mortality worldwide [1]. In this context, early cancer detection has emerged as a cornerstone strategy for improving patient outcomes. Research indicates that early detection is associated with a median overall survival of 38 months, compared to just 14 months with delayed diagnosis [1]. Beyond survival benefits, early detection raises quality of life scores from 55 to 75 and lowers the rate of severe treatment-related side effects from 45% to 18% [1]. These statistics underscore the clinical impact of diagnosing cancer at its most treatable stages.

The biological basis for this survival advantage is multifaceted. Early-stage cancers are generally more susceptible to complete surgical resection and respond better to localized therapies before they have acquired the complex mutational burden and heterogeneity that characterize advanced disease [1]. Additionally, early detection enables therapeutic intervention before cancer cells have developed the capacity for metastatic spread, which remains the primary cause of cancer-related mortality [1]. As of 2025, approximately 18.6 million people in the United States were living with a history of cancer, a number projected to exceed 22 million by 2035 [2]. This growing population of cancer survivors highlights both the progress in detection and treatment and the continuing need for more effective early diagnostic strategies.

Biomarker Classification and Clinical Applications

Defining Biomarker Categories and Functions

Biomarkers are objectively measured characteristics that provide valuable insights into disease diagnosis, prognosis, and therapeutic response [3]. In oncology, biomarkers play indispensable roles throughout the cancer care continuum, from risk assessment and early detection to treatment selection and recurrence monitoring [4]. They can be broadly categorized based on their clinical applications:

- Risk Stratification Biomarkers: Identify patients at higher than usual risk of disease who require closer monitoring (e.g., smoking history for lung cancer) [3].

- Screening and Detection Biomarkers: Detect diseases before symptoms manifest, when therapy has greater likelihood of success (e.g., low-dose computed tomography for lung cancer screening) [3].

- Diagnostic Biomarkers: Confirm the presence of diseases (e.g., biopsies for cancer diagnosis) [3].

- Prognostic Biomarkers: Provide information about overall expected clinical outcomes regardless of therapy (e.g., sarcomatoid mesothelioma has poor outcomes regardless of treatment) [3].

- Predictive Biomarkers: Inform clinical outcomes based on treatment decisions in biomarker-defined patients (e.g., EGFR mutations in non-small cell lung cancer predict response to targeted therapies) [3].

Established and Emerging Cancer Biomarkers

The biomarker landscape encompasses both traditional protein markers and novel molecular signatures, each with distinct clinical applications and performance characteristics.

Table 1: Established Protein Biomarkers and Their Clinical Applications

| Biomarker | Cancer Type | Primary Applications | References |
|---|---|---|---|
| CEA | Colon, Liver | Screening, identifying recurrence, treatment monitoring | [1] |
| CA 15-3 | Breast | Treatment monitoring | [1] |
| CA 125 | Ovary | Prognosis, identifying recurrence, treatment monitoring | [1] |
| CA 19-9 | Pancreas, Colon | Treatment monitoring | [1] |
| AFP | Liver (HCC) | Identifying recurrence, treatment monitoring, diagnosis | [1] |
| PSA | Prostate | Screening, identifying recurrence, treatment monitoring | [1] |
| HER2 | Lung, Breast | Monitoring therapy | [1] |

While these traditional biomarkers have proven utility, particularly in monitoring treatment response and recurrence, they often lack the sensitivity and specificity required for early detection [4]. This limitation has driven the exploration of novel biomarker classes with superior diagnostic potential.

Table 2: Emerging Biomarker Classes for Early Cancer Detection

| Biomarker Class | Key Advantages | Current Challenges | Representative Examples |
|---|---|---|---|
| Circulating Tumor DNA (ctDNA) | Non-invasive monitoring, tumor-specific mutations, treatment response assessment | Low concentration and fragmentation, inter-patient variability | EGFR mutations, KRAS mutations [1] |
| Exosomes | Carry proteins, nucleic acids, lipids from parent cells, stable in circulation | Complexity of isolation and purification, standardization | Tumor-derived exosomes with miRNA signatures [1] |
| MicroRNAs (miRNAs) | Remarkable stability in blood, dysregulated in early carcinogenesis | Tissue-specific expression patterns, quantification standardization | miR-21, miR-155 in multiple cancer types [1] |
| Immunotherapy Biomarkers | Predict response to immune checkpoint inhibitors | Dynamic changes during treatment, tumor heterogeneity | PD-L1 expression, MSI-H, TMB [4] |

Advanced Detection Technologies and Methodologies

Liquid Biopsy and Multi-Analyte Approaches

Liquid biopsy represents a transformative approach in early cancer detection, enabling non-invasive analysis of tumor-derived components in blood and other bodily fluids [1]. This methodology encompasses several analytical techniques:

Circulating Tumor DNA (ctDNA) Analysis: ctDNA refers to tumor-derived fragmented DNA in circulation that carries tumor-specific genetic and epigenetic alterations. Key methodologies include:

- Next-Generation Sequencing (NGS): Allows for comprehensive mutation profiling using targeted panels or whole-genome approaches [4].

- Digital PCR: Provides absolute quantification of rare mutant alleles with high sensitivity [1].

- Methylation Analysis: Identifies cancer-specific DNA methylation patterns that are highly characteristic of malignancy [5].

Fragmentomics: This emerging field analyzes the size and structure of cell-free DNA fragments rather than their sequence content. Tumor-derived DNA fragments exhibit distinct size distributions and fragmentation patterns compared to DNA from healthy cells, a difference that can be exploited for sensitive cancer detection [5].
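As a rough illustration of the fragment-size idea, the sketch below summarizes a list of cfDNA fragment lengths as a short-to-long ratio. The window boundaries (100-150 bp and 151-220 bp) and the toy length lists are assumptions chosen for illustration only; they are not taken from the cited work, and a real assay would derive its features and cutoffs from validated cohorts.

```python
from collections import Counter


def short_fragment_ratio(lengths, short=(100, 150), long=(151, 220)):
    """Ratio of short to long cfDNA fragments.

    The default windows are illustrative assumptions, not validated cutoffs.
    """
    n_short = sum(1 for n in lengths if short[0] <= n <= short[1])
    n_long = sum(1 for n in lengths if long[0] <= n <= long[1])
    if n_long == 0:
        raise ValueError("no fragments fall in the long window")
    return n_short / n_long


def modal_length(lengths):
    """Most frequent fragment length in the sample."""
    return Counter(lengths).most_common(1)[0][0]


# Toy data (hypothetical): the second sample is enriched for shorter fragments.
healthy_like = [167] * 60 + [160] * 20 + [145] * 10 + [180] * 10
tumor_like = [167] * 40 + [145] * 35 + [130] * 15 + [180] * 10

if __name__ == "__main__":
    for name, sample in (("healthy-like", healthy_like), ("tumor-like", tumor_like)):
        print(name, modal_length(sample), round(short_fragment_ratio(sample), 3))
```

A single ratio is deliberately simplistic; it only shows how a shift toward shorter fragments becomes a numeric feature that a classifier could consume.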

Multi-Omics Integration: The most advanced approaches combine multiple analyte classes to improve detection sensitivity and specificity. For example, integrating ctDNA mutation analysis with protein biomarker quantification and fragmentomic patterns has demonstrated enhanced performance for multi-cancer early detection [5].

Experimental Workflows for Biomarker Development

The development and validation of biomarkers for early detection follows a structured pathway from discovery to clinical application.

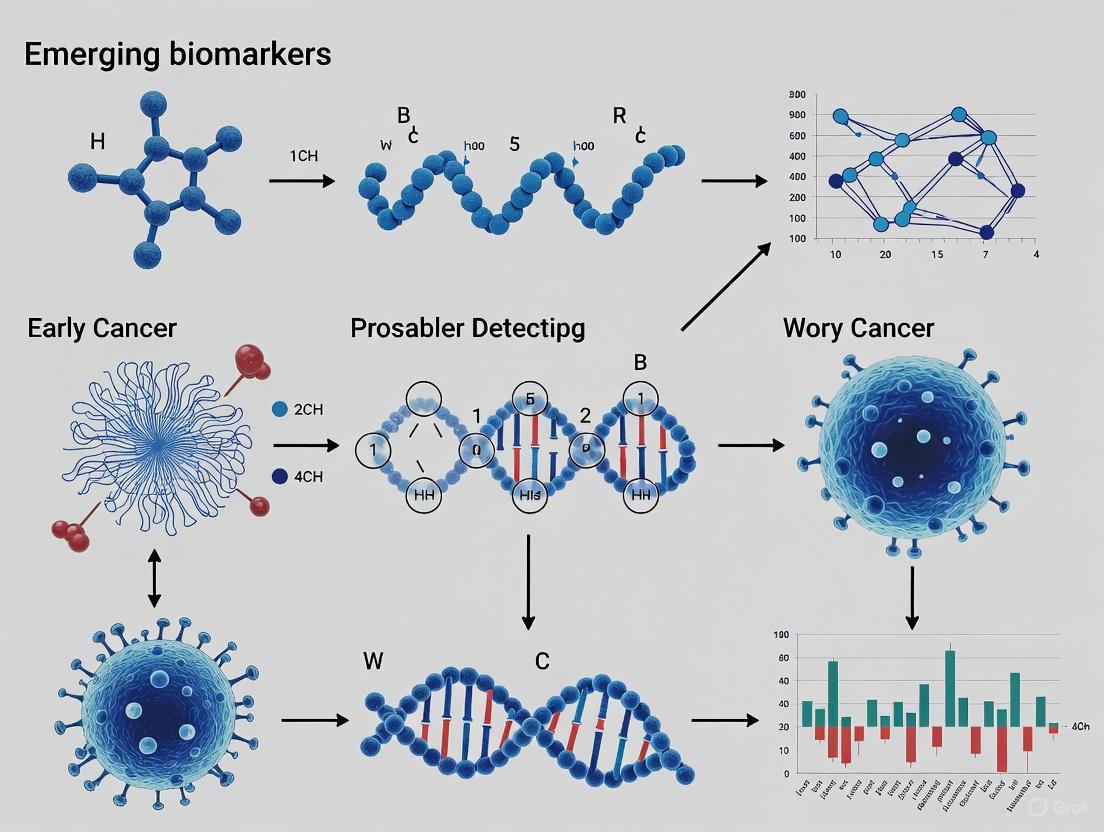

Diagram 1: Biomarker Development Pipeline. This workflow illustrates the structured pathway from initial discovery to clinical implementation, encompassing distinct phases of analytical and clinical validation [3] [6].

Essential Research Reagents and Platforms

The advancement of early detection biomarkers relies on sophisticated research tools and platforms that enable precise molecular characterization.

Table 3: Essential Research Reagent Solutions for Biomarker Development

| Technology Category | Specific Platforms/Assays | Primary Research Applications | Key Considerations |
|---|---|---|---|
| Gene Expression Analysis | RNA-Seq, Gene Expression Microarrays, TaqMan Gene Expression Assays | Biomarker discovery, transcriptional profiling, verification | Concordance across platforms, dynamic range, sensitivity [7] |
| Next-Generation Sequencing | Whole Exome Sequencing (WES), Whole Genome Sequencing (WGS), Targeted Panels | Comprehensive mutation profiling, fusion gene detection, biomarker discovery | Coverage depth, variant calling accuracy, cost [4] |
| Digital PCR | QuantStudio 3D Digital PCR System | Rare mutation detection, absolute quantification, validation studies | Sensitivity, throughput, multiplexing capability [7] |
| Immunoassay Platforms | Immunohistochemistry (IHC), Multiplex Immunoassays | Protein biomarker validation, immune cell profiling, PD-L1 scoring | Antibody specificity, quantification methods, standardization [4] |
| Single-Cell Analysis | Single-Cell RNA Sequencing, Cytometry by Time-of-Flight (CyTOF) | Tumor heterogeneity, tumor microenvironment characterization, rare cell detection | Cell viability, marker panels, computational analysis [3] |

Technological Innovations and Research Frontiers

Artificial Intelligence and Multi-Omics Integration

The integration of artificial intelligence (AI) with multi-omics data represents a paradigm shift in early cancer detection. AI algorithms can identify complex patterns across genomic, transcriptomic, proteomic, and metabolomic datasets that are imperceptible to conventional analysis [8]. For example, machine learning approaches applied to microbiome data have enabled the identification of microbial signatures associated with colorectal cancer across multiple cohorts [9]. Similarly, AI-powered analysis of CT scans can predict lung cancer risk with higher accuracy than traditional radiological assessment [5].

Multi-omics integration combines data from various molecular levels to create comprehensive signatures of early malignancy. This approach has demonstrated particular promise in detecting cancers that currently lack effective screening methods, such as pancreatic and ovarian cancers [8]. By combining ctDNA mutations, protein biomarkers, and fragmentomic patterns, these multi-modal assays can achieve sensitivities exceeding 90% for certain cancer types while maintaining high specificity [5].

Microbial Biomarkers and the Human Microbiome

Emerging evidence indicates that the human microbiome, particularly gut and oral microbiota, plays a significant role in carcinogenesis and offers novel biomarkers for early detection [9]. Computational frameworks like xMarkerFinder enable the identification and validation of microbial biomarkers from cross-cohort datasets through a four-stage process: differential signature identification, model construction, model validation, and biomarker interpretation [9].

Key advances in this field include:

- Multi-kingdom microbiota analyses that examine interactions between bacteria, fungi, and viruses in carcinogenesis [9].

- Metagenomic analysis of fecal samples to identify microbial single nucleotide variants as superior biomarkers for early detection of colorectal cancer [9].

- Oral microbiome signatures associated with oral squamous cell carcinoma identified using random forest models [9].

These microbial biomarkers offer particular promise for gastrointestinal cancers but are also being investigated for cancers at more distant sites through their influence on inflammation, immune function, and metabolism.

Validation Frameworks and Clinical Translation

Statistical Considerations and Validation Standards

Robust biomarker validation requires careful statistical planning and consideration of potential biases throughout the development process. Key statistical metrics for evaluating biomarker performance include:

- Sensitivity: The proportion of true positive cases that test positive [3]

- Specificity: The proportion of true negative controls that test negative [3]

- Positive Predictive Value (PPV): Proportion of test-positive patients who actually have the disease [3]

- Negative Predictive Value (NPV): Proportion of test-negative patients who truly do not have the disease [3]

- Area Under the Curve (AUC): Overall measure of diagnostic accuracy across all possible thresholds [3]
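The metrics listed above can be computed directly from a confusion matrix and from raw assay scores. The following is a minimal sketch; the counts and scores are invented for illustration, and the AUC is computed with the pairwise (Mann-Whitney) formulation rather than by integrating a plotted ROC curve.

```python
def diagnostic_metrics(tp, fp, tn, fn):
    """Sensitivity, specificity, PPV and NPV from confusion-matrix counts."""
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
        "npv": tn / (tn + fn),
    }


def auc(case_scores, control_scores):
    """AUC as the probability that a random case outscores a random control.

    Ties count as half a win. Runs in O(n*m) time, which is fine for small sets.
    """
    wins = 0.0
    for case in case_scores:
        for control in control_scores:
            if case > control:
                wins += 1.0
            elif case == control:
                wins += 0.5
    return wins / (len(case_scores) * len(control_scores))


# Hypothetical study: 100 cancers and 1,000 controls.
metrics = diagnostic_metrics(tp=90, fp=50, tn=950, fn=10)
example_auc = auc([0.9, 0.8, 0.4], [0.7, 0.3, 0.2])

if __name__ == "__main__":
    for name, value in metrics.items():
        print(f"{name}: {value:.3f}")
    print(f"auc: {example_auc:.3f}")
```

In this invented example the PPV is about 64% even though sensitivity is 90% and specificity is 95%, because PPV and NPV depend on how common the disease is in the tested population. Sensitivity and specificity do not.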

The validation process must address several potential sources of bias, including patient selection bias, specimen collection variability, and analytical batch effects [3]. Randomized specimen assignment and blinding of personnel involved in biomarker data generation to clinical outcomes are essential methods for minimizing these biases [3].

Table 4: Biomarker Validation Stages and Key Considerations

| Validation Stage | Primary Objectives | Sample Considerations | Regulatory Status |
|---|---|---|---|
| Research Use Only (RUO) | Demonstrate reproducible performance in independent datasets | Archived specimens with known outcomes | Not for diagnostic use [6] |
| Retrospective Clinical Validation | Assess performance in purpose-designed testing parameters | Representative clinical study sample cohort | Investigational [6] |
| Prospective Clinical Validation | Evaluate performance in intended-use population | Prospective collection from target population | Investigational Device Exemption (IDE) [6] |
| Clinical Utility | Demonstrate improvement in clinically meaningful endpoints | Large, diverse cohorts in real-world settings | Premarket Approval (PMA) [6] |

Addressing Translational Challenges

The path from biomarker discovery to clinical implementation faces several significant challenges. Low concentration and fragmentation of ctDNA, complexity of exosome isolation, inter-patient variability in miRNA expression, and absence of clinical standardization present substantial technical hurdles [1]. Additionally, equitable access to emerging technologies remains a concern, as patients in low-income countries are 50% less likely to be diagnosed with cancer than patients in high-income countries due to limited accessibility to diagnostic procedures [1].

Potential strategies to address these challenges include:

- Development of pre-analytical standards for specimen collection and processing

- Creation of reference materials for assay calibration and quality control

- Implementation of computational methods to correct for batch effects and technical variability [9]

- Design of inclusive validation studies that encompass diverse patient populations

- Establishment of cost-effective testing platforms suitable for low-resource settings

The field of early cancer detection stands at a transformative juncture, with emerging biomarker technologies offering unprecedented opportunities to diagnose cancer at its most treatable stages. Circulating tumor DNA, exosomes, microRNAs, and immunotherapy biomarkers represent promising avenues for non-invasive detection, while advanced analytical approaches like fragmentomics and multi-omics integration are enhancing the sensitivity and specificity of these assays.

The successful translation of these technologies into clinical practice will require multidisciplinary collaboration among researchers, clinicians, diagnostic developers, and regulatory agencies. Future research should prioritize overcoming current technical challenges, establishing standardized protocols, and demonstrating clinical utility through well-designed validation studies. Additionally, ensuring equitable access to these advances will be crucial for realizing their full potential to reduce the global burden of cancer.

As these technologies mature, they hold the promise of fundamentally reshaping cancer care through earlier intervention, personalized risk assessment, and ultimately, significant improvements in cancer survival rates and quality of life for patients worldwide.

Early cancer detection is a pivotal factor in improving patient survival rates and overall outcomes. Reported figures indicate that early detection is associated with a median overall survival of 38 months, compared with 14 months for delayed diagnosis [1]. Furthermore, it can raise quality of life scores from 55 to 75 and reduce the rate of severe treatment-related side effects from 45% to 18% [1]. Despite these benefits, approximately 50% of cancer cases are still diagnosed at advanced stages, leading to poor prognoses and high mortality, a challenge particularly acute in low-resource settings [1]. The global cancer burden is immense, with 20 million new cases and 10 million cancer-associated deaths reported in 2022 alone, making cancer the second leading cause of mortality worldwide [1].

This context underscores the critical need for advanced diagnostic tools. The field is undergoing a significant transformation, moving beyond traditional tissue biopsies and single-analyte tests towards a new paradigm defined by minimally invasive liquid biopsies and multi-analyte profiling [10] [11]. This next generation of biomarkers, including circulating tumor DNA (ctDNA), exosomes, and microRNAs (miRNAs), offers a powerful, non-invasive approach to understanding tumor dynamics [1] [11]. These biomarkers, accessible from simple blood draws or other body fluids, enable earlier detection, real-time monitoring of treatment response, and the tracking of minimal residual disease, thereby redefining the standards of precision oncology [11]. This whitepaper provides an in-depth technical guide to these core biomarkers, framing them within the broader thesis of their collective role in advancing early cancer detection research.

Biomarker Deep Dive: Characteristics, Technologies, and Workflows

Circulating Tumor DNA (ctDNA)

ctDNA refers to short fragments of tumor-derived DNA that are shed into the bloodstream and other body fluids through processes such as apoptosis, necrosis, and active secretion from tumor cells [10]. It is a subset of cell-free DNA (cfDNA) and typically constitutes a small fraction, approximately 0.1% to 1.0%, of the total cfDNA in cancer patients [10]. A key characteristic of ctDNA is its short half-life, often as brief as 1-2.5 hours, which allows it to provide a real-time snapshot of the tumor's molecular landscape at a given point in time [10]. This dynamism makes it an excellent biomarker for monitoring disease progression and treatment response.
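To make the "real-time snapshot" point concrete, the sketch below estimates how much of an initial ctDNA pool would remain after a given time. It assumes simple first-order (exponential) clearance and no further shedding from the tumor; both are simplifications introduced here for illustration, not claims from the cited source.

```python
def fraction_remaining(hours_elapsed, half_life_hours):
    """Fraction of an initial ctDNA pool left after first-order clearance.

    Assumes exponential decay and no ongoing release (illustrative only).
    """
    if half_life_hours <= 0:
        raise ValueError("half-life must be positive")
    return 0.5 ** (hours_elapsed / half_life_hours)


if __name__ == "__main__":
    # Bracket the half-life range quoted in the text (1 to 2.5 hours).
    for half_life in (1.0, 2.0, 2.5):
        left = fraction_remaining(24, half_life)
        print(f"half-life {half_life} h -> {left:.2e} of the pool left after 24 h")
```

Under these assumptions, even the slowest quoted half-life leaves well under 1% of the original molecules after a day, which is why a plasma measurement mostly reflects recent tumor shedding.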

The primary molecular hallmarks detected in ctDNA analysis include:

- Somatic Mutations: Such as point mutations in genes like EGFR, KRAS, and TP53 [12] [10].

- Gene Fusions/Rearrangements: Involving oncogenes like ALK and ROS1 [12].

- DNA Methylation Changes: Aberrant hypermethylation or hypomethylation of gene promoter regions, which often precedes tumor formation and offers strong early diagnostic signals [12] [10].

Technological advancements have been crucial for harnessing the potential of ctDNA. Key enabling technologies include:

- Next-Generation Sequencing (NGS): Allows for high-throughput, sensitive characterization of rare ctDNA mutations across multiple genes simultaneously [1] [11].

- Digital PCR (dPCR) and BEAMing: Provide ultra-sensitive, quantitative detection of known, low-frequency mutations [10].

- Microfluidic Devices: Facilitate the isolation and analysis of ctDNA with high efficiency [11].
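Digital PCR achieves absolute quantification by counting positive partitions and applying a Poisson correction, because a positive partition may hold more than one template molecule. The sketch below shows that arithmetic. The partition counts and the 0.85 nL partition volume are hypothetical inputs; actual volumes depend on the instrument.

```python
import math


def copies_per_partition(positive, total):
    """Mean template copies per partition (lambda) via Poisson correction."""
    if not 0 <= positive < total:
        raise ValueError("need 0 <= positive < total partitions")
    return -math.log(1 - positive / total)


def concentration_copies_per_ul(positive, total, partition_volume_nl):
    """Target concentration in the reaction, in copies per microliter."""
    lam = copies_per_partition(positive, total)
    return lam / (partition_volume_nl * 1e-3)  # convert nL to uL


def fractional_abundance(mutant_positive, wildtype_positive, total):
    """Mutant fraction = lambda_mut / (lambda_mut + lambda_wt)."""
    lam_mut = copies_per_partition(mutant_positive, total)
    lam_wt = copies_per_partition(wildtype_positive, total)
    return lam_mut / (lam_mut + lam_wt)


if __name__ == "__main__":
    # Hypothetical run: 20,000 partitions of 0.85 nL each.
    print(concentration_copies_per_ul(2000, 20000, 0.85))
    print(fractional_abundance(20, 18000, 20000))
```

The second call shows why the correction matters. With 90% of partitions positive for wild type, most hold several wild-type copies, so the naive ratio 20/18,000 would overstate the mutant fraction.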

Figure 1: ctDNA Biogenesis and Analysis Workflow. The diagram illustrates the pathway from tumor DNA release into the bloodstream to clinical application.

Exosomes and Extracellular Vesicles

Exosomes are a class of extracellular vesicles (EVs), typically 30-150 nm in diameter, that are released by virtually all cells, including cancer cells [1] [10]. They play a crucial role in intercellular communication and are loaded with a diverse molecular cargo derived from their parent cell. This cargo includes:

- Nucleic Acids: DNA, miRNAs, other non-coding RNAs, and mRNAs.

- Proteins: Tetraspanins (CD63, CD81, CD9), heat shock proteins, and tumor-specific antigens.

- Lipids.

For cancer diagnostics, exosomes are valuable because they protect their internal cargo from degradation, providing a stable source of tumor-specific information [1] [11]. Their presence in readily accessible body fluids like blood, urine, and saliva makes them ideal for non-invasive liquid biopsies [13].

The isolation of exosomes remains a technical challenge, and the choice of method can significantly impact downstream analysis. Common techniques include:

- Ultracentrifugation: The traditional gold standard, though it can be time-consuming and may co-precipitate contaminants.

- Size-Based Isolation Techniques: Such as ultrafiltration and size-exclusion chromatography.

- Immunoaffinity Capture: Using antibodies against exosomal surface markers (e.g., CD63, CD81) for highly specific isolation.

- Polymer-Based Precipitation: A simple but less specific method.

- Microfluidic Devices: Emerging platforms that offer rapid, automated isolation with high purity and yield [11].

Once isolated, the exosomal cargo can be characterized using a variety of omics technologies, including RNA-Seq for transcriptomic profiling, mass spectrometry for proteomic analysis, and NGS for genetic and epigenetic characterization [11].

MicroRNAs (miRNAs)

MicroRNAs (miRNAs) are small, single-stranded, non-coding RNA molecules approximately 19-25 nucleotides in length that function as key post-transcriptional regulators of gene expression [1] [13]. They can stably circulate in body fluids, either bound to proteins like Argonaute 2 or encapsulated within extracellular vesicles such as exosomes, which protect them from RNase degradation [13]. This stability makes them exceptionally suitable for clinical assay development.

The relevance of miRNAs in oncology stems from their role as oncogenic drivers (oncomiRs) or tumor suppressors. Cancer cells often show differential miRNA expression patterns—either upregulation or downregulation—compared to normal cells, which can be exploited for diagnostic and prognostic purposes [12]. For instance, specific miRNA signatures can distinguish malignant from benign conditions with high accuracy.
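Upregulation or downregulation of a miRNA is often expressed as a fold change. One widely used way to obtain it from RT-qPCR data is the 2^-ΔΔCt calculation, sketched below. The Ct values are invented, the choice of reference (normalizer) RNA is left open as an assumption, and the formula presumes roughly 100% amplification efficiency for both target and reference.

```python
def fold_change_ddct(ct_target_case, ct_ref_case, ct_target_ctrl, ct_ref_ctrl):
    """Relative expression of a target miRNA (case vs. control) by 2^-ddCt.

    Assumes near-100% amplification efficiency for target and reference.
    """
    delta_case = ct_target_case - ct_ref_case
    delta_ctrl = ct_target_ctrl - ct_ref_ctrl
    return 2 ** -(delta_case - delta_ctrl)


if __name__ == "__main__":
    # Hypothetical Ct values: the target amplifies 3 cycles earlier in the case
    # sample once both samples are normalized to the reference RNA.
    fc = fold_change_ddct(ct_target_case=24.0, ct_ref_case=20.0,
                          ct_target_ctrl=27.0, ct_ref_ctrl=20.0)
    print(f"fold change: {fc:.1f}")
```

A value above 1 indicates higher expression in the case sample and a value below 1 indicates lower expression. Because circulating miRNA lacks a universally accepted normalizer, the reference choice should be validated for each study.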

Research into body fluid miRNAs for gastrointestinal tract (GIT) tumors has been particularly active. A bibliometric analysis of 775 publications from 2010-2025 showed that China, Japan, and the United States were the top three countries contributing to this field, with research hotspots shifting towards "liquid biopsy", "extracellular vesicles", and "machine learning" in recent years [13]. The analysis concluded that the prospective trends involve further exploration of miRNAs encapsulated in extracellular vesicles, which will likely advance early screening and personalized treatment [13].

Figure 2: MicroRNA Workflow from Biogenesis to Application. The pathway details the process from miRNA generation within a tumor cell to its analysis and clinical use.

Comparative Analysis of Key Biomarkers

Table 1: Comparative Analysis of ctDNA, Exosome, and miRNA Biomarkers

| Characteristic | Circulating Tumor DNA (ctDNA) | Exosomes | MicroRNAs (miRNAs) |
|---|---|---|---|
| Biological Origin | Apoptosis, necrosis of tumor cells [10] | Active secretion from cells (multivesicular bodies) [1] | Transcription from genome; often packaged in exosomes [13] |
| Primary Molecular Content | Tumor-specific mutations, methylation patterns [12] [10] | Proteins, lipids, DNA, miRNAs, mRNAs [1] [11] | Mature miRNA sequences (~22 nt) [13] |
| Approximate Half-Life | Short (~1-2.5 hours) [10] | Believed to be relatively stable | Highly stable in circulation (vesicle/protein-bound) [13] |
| Key Isolation Methods | cfDNA extraction kits from plasma | Ultracentrifugation, size-exclusion, immunoaffinity [1] | RNA extraction; specific capture from plasma/serum |
| Primary Analysis Technologies | NGS, dPCR, BEAMing [10] [11] | NTA, Western Blot, RNA-Seq, Mass Spec [11] | RT-qPCR, miRNA-Seq, microarrays [13] |
| Key Strengths | Direct genomic information; real-time dynamics; guides targeted therapy [12] [11] | Rich, multi-omic cargo; protects contents; reflects cell of origin [1] [11] | High stability; differential expression patterns; early diagnostic potential [12] [13] |
| Major Challenges | Low fractional abundance; high fragmentation; requires deep sequencing [1] | Complex isolation and standardization; heterogeneous population [1] | Inter-patient variability; need for normalized panels; complex biology [1] |

Integrated Experimental Protocols

Integrated Liquid Biopsy Workflow for Biomarker Analysis

This protocol outlines a comprehensive methodology for the simultaneous analysis of ctDNA, exosomes, and miRNAs from a single blood sample, enabling a multi-analyte liquid biopsy approach.

I. Sample Collection and Pre-processing

- Blood Draw: Collect 10-20 mL of peripheral blood into EDTA or Streck Cell-Free DNA BCT blood collection tubes to prevent nuclease degradation and preserve ctDNA.

- Plasma Separation: Process within 2-4 hours of draw. Centrifuge at 1,600-2,000 x g for 10-20 minutes at 4°C to separate plasma from cellular components.

- Second Centrifugation: Transfer the supernatant (plasma) to a new tube and perform a second, high-speed centrifugation at 16,000 x g for 10-15 minutes at 4°C to remove any remaining cells, platelets, and debris. Aliquot the clarified plasma for downstream applications.
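The centrifugation steps above are specified in relative centrifugal force (x g), whereas many benchtop centrifuges are set in RPM. The conversion depends on rotor radius. The sketch below uses the standard relation RCF = 1.118e-5 × r(cm) × RPM²; the 10 cm radius is a hypothetical value and should be replaced with the figure from the rotor's documentation.

```python
import math

RCF_CONSTANT = 1.118e-5  # for radius in cm and speed in RPM


def rcf_from_rpm(rpm, radius_cm):
    """Relative centrifugal force (x g) at a given speed and rotor radius."""
    return RCF_CONSTANT * radius_cm * rpm ** 2


def rpm_from_rcf(rcf, radius_cm):
    """Rotor speed (RPM) needed to reach a target RCF."""
    if rcf < 0 or radius_cm <= 0:
        raise ValueError("rcf must be non-negative and radius positive")
    return math.sqrt(rcf / (RCF_CONSTANT * radius_cm))


if __name__ == "__main__":
    radius_cm = 10.0  # hypothetical rotor radius
    for target in (1600, 2000, 16000):
        print(f"{target} x g -> {rpm_from_rcf(target, radius_cm):.0f} RPM")
```

Setting the same RPM on rotors of different radius yields different forces, so recording protocols in x g (as done here) keeps the pre-analytical step comparable across laboratories.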

II. Concurrent Biomarker Isolation

- Exosome Isolation (from 2-4 mL plasma):

  - Method: Use a commercial exosome isolation kit based on polymer precipitation or size-exclusion chromatography for balanced yield and purity.

  - Procedure: Mix plasma with precipitation solution, incubate overnight at 4°C, then centrifuge at >10,000 x g to pellet exosomes.

  - Resuspension: Resuspend the exosome pellet in a small volume of PBS or nuclease-free water.

  - Characterization (Optional): Confirm isolation and size distribution using Nanoparticle Tracking Analysis (NTA) and protein markers (CD63, CD81) via Western Blot.

- Co-isolation of ctDNA and Cell-Free miRNA (from 3-5 mL plasma):

  - Use a commercial cfDNA/ccfRNA extraction kit designed to co-purify both DNA and RNA from the same plasma sample.

  - Bind nucleic acids to a silica membrane column, wash, and elute in a small volume. The eluate contains both ctDNA (if present) and circulating miRNAs (both vesicular and free).

III. Downstream Molecular Analysis

- ctDNA Analysis:

  - Quantification: Use a fluorometer (e.g., Qubit) to measure cfDNA concentration.

  - Quality Control: Analyze fragment size distribution using a Bioanalyzer or TapeStation; expect a peak at ~167 bp.

  - Mutation Detection:

    - For known mutations: Use digital PCR (dPCR) for ultra-sensitive, absolute quantification of specific mutations (e.g., EGFR T790M, KRAS G12C).

    - For unknown/unbiased profiling: Prepare an NGS library. Due to low ctDNA abundance, use ultra-deep sequencing (e.g., >10,000x coverage) with unique molecular identifiers (UMIs) to correct for errors.

- Exosomal Cargo Analysis:

  - RNA Extraction: Isolate total RNA from the isolated exosomes using a miniaturized RNA extraction kit.

  - miRNA Profiling: For the exosomal RNA and the cell-free RNA co-isolated in Section II:

    - RT-qPCR: For targeted analysis of a specific miRNA panel (e.g., miR-21, miR-155). Use specific stem-loop primers for reverse transcription for high specificity.

    - miRNA-Seq: For discovery-based profiling. Prepare libraries using a dedicated small RNA library prep kit to capture the 15-30 nt miRNA fraction. Sequence on an NGS platform.

- Integrated Data Analysis:

  - Bioinformatic Processing: Align NGS reads to the reference genome (ctDNA) or miRBase (miRNA). For ctDNA, call somatic variants and calculate variant allele frequency (VAF). For miRNA, normalize read counts and perform differential expression analysis.

  - Data Integration: Combine the mutation status from ctDNA, the miRNA expression signature from exosomal/cell-free RNA, and potentially exosomal protein data to generate a multi-modal diagnostic score.
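The UMI-based error correction and VAF calculation named in the protocol can be sketched as follows for a single genomic position. Reads sharing a UMI are treated as copies of one original molecule and collapsed to a consensus base, and VAF is then computed over molecules rather than raw reads. The family-size and agreement thresholds, the UMI strings, and the toy reads are hypothetical; production pipelines also group by mapping coordinates and handle base qualities, which this sketch omits.

```python
from collections import Counter, defaultdict


def consensus_by_umi(reads, min_family_size=2, min_agreement=0.8):
    """Collapse (umi, base) observations at one position into consensus bases.

    Families that are too small or too discordant are dropped.
    Thresholds are illustrative assumptions.
    """
    families = defaultdict(list)
    for umi, base in reads:
        families[umi].append(base)

    consensus = []
    for bases in families.values():
        if len(bases) < min_family_size:
            continue  # a singleton cannot be error-checked
        base, count = Counter(bases).most_common(1)[0]
        if count / len(bases) >= min_agreement:
            consensus.append(base)
    return consensus


def vaf(consensus_bases, alt_base):
    """Variant allele frequency over consensus molecules."""
    if not consensus_bases:
        raise ValueError("no consensus molecules at this position")
    return sum(1 for b in consensus_bases if b == alt_base) / len(consensus_bases)


# Toy pileup (hypothetical): reference base C, candidate variant T.
reads = (
    [("AAC", "C")] * 3
    + [("GGT", "C")] * 4 + [("GGT", "T")]  # lone T in this family: likely PCR/sequencing error
    + [("TTA", "T")] * 3                   # every copy carries T: likely a true variant molecule
    + [("CCG", "C")]                       # singleton family, discarded
    + [("ACG", "C")] * 2
    + [("TGC", "C")] * 4
)
molecules = consensus_by_umi(reads)

if __name__ == "__main__":
    print(sorted(molecules), vaf(molecules, "T"))
```

In this toy pileup the stray T inside the GGT family is voted out, so the variant is supported by one molecule out of five instead of four reads out of eighteen.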

Research Reagent Solutions

Table 2: Essential Research Reagents and Kits for Liquid Biopsy

| Item | Function/Description | Example Use Case |
|---|---|---|
| Cell-Free DNA BCT Tubes | Blood collection tubes with preservatives that stabilize nucleated blood cells and prevent ctDNA degradation. | Maintains integrity of ctDNA during sample transport and storage prior to plasma processing [10]. |
| cfDNA/cfRNA Extraction Kit | Silica-membrane or magnetic bead-based kits for simultaneous isolation of cell-free DNA and RNA from plasma. | Co-purification of ctDNA and circulating miRNAs (including exosomal miRNAs) from a single plasma aliquot. |
| Exosome Isolation/Precipitation Kit | Polymer-based solutions that alter the solubility of exosomes, enabling precipitation via centrifugation. | Rapid isolation of exosomes from plasma/serum/urine for downstream RNA or protein analysis [1]. |
| Digital PCR System | Platform that partitions a single PCR reaction into thousands of nanoreactions for absolute quantification of nucleic acids. | Sensitive detection and quantification of low-frequency mutations (e.g., <0.1% VAF) in ctDNA [10] [11]. |
| Small RNA Library Prep Kit | Reagents for constructing sequencing libraries specifically from the small RNA fraction (<200 nt). | Preparation of miRNA-Seq libraries from exosomal or total plasma RNA to profile miRNA expression [13] [11]. |
| Next-Generation Sequencer | High-throughput platform (e.g., Illumina, Ion Torrent) for parallel sequencing of millions of DNA fragments. | Comprehensive profiling of ctDNA mutations/methylation and exosomal RNA cargo [1] [11]. |
| Bioinformatic Analysis Pipelines | Software for aligning sequences, calling variants, and performing differential expression analysis. | Interpreting raw NGS data to generate actionable biological insights (mutational landscapes, miRNA signatures) [13]. |

The convergence of ctDNA, exosomes, and miRNAs represents a powerful, multi-faceted toolkit that is defining the next generation of cancer diagnostics. While each biomarker has unique strengths and technical challenges, their integration offers a more comprehensive view of the tumor's molecular state than any single analyte could provide. The translation of these biomarkers from research tools to routine clinical practice hinges on overcoming key challenges, including the standardization of isolation protocols, validation in large-scale multi-center trials, and improving accessibility in low-resource settings [1]. As technological innovations in sequencing, microfluidics, and artificial intelligence continue to mature, the combined application of these liquid biopsy biomarkers holds promise to transform early cancer detection, advance precision medicine, and ultimately improve patient survival and quality of life.

Liquid biopsy represents a transformative approach in oncology, enabling the detection and management of cancer through the analysis of tumor-derived components in bodily fluids. This minimally invasive technique stands in contrast to traditional tissue biopsies, addressing critical limitations such as invasiveness, inability to capture tumor heterogeneity, and challenges in longitudinal monitoring [14] [10]. The fundamental principle underlying liquid biopsy involves the "liquid" phase of tumors, where cancer cells release various biological materials—including circulating tumor cells (CTCs), circulating tumor DNA (ctDNA), extracellular vesicles (EVs), and cell-free RNA (cfRNA)—into the circulation and other body fluids [14] [15]. These analytes serve as rich sources of molecular information about the tumor's genetic makeup, mutational status, and dynamic changes over time. For researchers and drug development professionals, liquid biopsies offer unprecedented opportunities to study tumor evolution, monitor therapeutic resistance, and develop novel biomarkers for early detection within the broader context of advancing precision oncology [10] [16].

Key Biomarkers in Liquid Biopsy: Technical Specifications and Research Applications

Liquid biopsy analysis encompasses multiple biomarker classes, each with distinct characteristics, isolation challenges, and research applications. The table below summarizes the technical specifications of major liquid biopsy biomarkers relevant to cancer screening and monitoring.

Table 1: Technical Specifications of Major Liquid Biopsy Biomarkers

| Biomarker | Origin & Composition | Half-Life | Primary Isolation and Analysis Methods | Key Research Applications |

|---|---|---|---|---|

| Circulating Tumor Cells (CTCs) | Cells shed from primary/metastatic tumors [10] | 1-2.5 hours [10] | Immunomagnetic separation (CellSearch), microfluidic devices, filtration [10] [15] | Studying metastasis, EMT, drug resistance mechanisms [15] |

| Circulating Tumor DNA (ctDNA) | DNA fragments released from apoptotic/necrotic tumor cells [10] | ~2 hours [17] | BEAMing, ddPCR, NGS-based panels [10] [17] | Tracking tumor heterogeneity, monitoring MRD, identifying actionable mutations [16] |

| Tumor Extracellular Vesicles (EVs) | Membrane-bound vesicles (50-1000 nm) containing proteins, nucleic acids [14] | Not specified | Ultracentrifugation, nanomembrane ultrafiltration, precipitation [14] | Investigating intercellular communication, biomarker discovery [14] |

| Cell-Free RNA (cfRNA) | RNA released from tumor/microbiome sources [18] | Varies by RNA type | RNA stabilization, extraction, modification analysis [18] | Early detection, studying tumor microenvironment interactions [18] |

| Tumor-Educated Platelets (TEPs) | Platelets that have ingested tumor-derived biomaterial [14] | 8-10 days | Antibody-based isolation, RNA sequencing [14] | Exploring cancer-induced platelet education, metastasis studies [14] |

Circulating Tumor DNA (ctDNA) and Advanced Detection Methodologies

ctDNA has emerged as a particularly promising biomarker due to its short half-life (~2 hours) and ability to provide real-time information on tumor genetics [17]. ctDNA typically constitutes only 0.1-1.0% of total cell-free DNA (cfDNA) in cancer patients, presenting significant detection challenges, especially in early-stage disease [10] [17]. Next-generation sequencing (NGS) technologies have dramatically improved ctDNA detection sensitivity, with newer assays like Northstar Select demonstrating a limit of detection (LOD) of 0.15% variant allele frequency (VAF) for single nucleotide variants (SNVs) and indels [19]. This enhanced sensitivity is crucial for detecting minimal residual disease (MRD) and early-stage cancers where tumor DNA shedding is minimal. The clinical utility of ctDNA has been validated through FDA-approved tests such as Guardant360 CDx and FoundationOne Liquid CDx, which are now integrated into clinical practice as companion diagnostics for various targeted therapies [20] [21].

Emerging RNA-Based Approaches for Early Detection

While DNA-based approaches dominate current liquid biopsy applications, RNA-based methodologies show exceptional promise for early cancer detection. Researchers at the University of Chicago developed a novel approach analyzing RNA modifications in cell-free RNA (cfRNA) rather than relying on DNA mutations [18]. This method demonstrated 95% accuracy in detecting early-stage colorectal cancer, significantly outperforming existing non-invasive tests whose accuracy drops below 50% for early stages [18]. The approach uniquely leverages modifications on microbial RNA from the gut microbiome, which reflects changes in the tumor microenvironment. As microbiome cells turn over more rapidly than human cells, they release more detectable signals earlier in tumor development, providing a sensitive indicator of nascent malignancies [18].

Experimental Protocols for Liquid Biopsy Analysis

Comprehensive Protocol for ctDNA Analysis Using NGS

The following detailed protocol outlines the complete workflow for ctDNA analysis using next-generation sequencing, optimized for research applications in cancer detection and monitoring.

Table 2: Essential Research Reagents for ctDNA NGS Analysis

| Reagent Category | Specific Examples | Research Function |

|---|---|---|

| Blood Collection Tubes | Cell-Free DNA BCT tubes (Streck), PAXgene Blood ccfDNA tubes | Preserves cfDNA integrity by preventing leukocyte lysis and genomic DNA contamination [19] |

| cfDNA Extraction Kits | QIAamp Circulating Nucleic Acid Kit (Qiagen), MagMax Cell-Free DNA Isolation Kit | Isolates high-quality cfDNA with minimal fragmentation from plasma samples [19] |

| Library Preparation | KAPA HyperPrep Kit, Illumina TruSeq DNA PCR-Free Library Preparation Kit | Prepares sequencing libraries with unique molecular identifiers to reduce amplification bias [19] |

| Hybridization Capture | IDT xGen Lockdown Probes, Twist Human Core Exome | Enriches for target genomic regions of interest; custom panels available [19] |

| Sequencing Reagents | Illumina NovaSeq 6000 S4 Flow Cell, NextSeq 1000/2000 P3 reagents | Provides high-throughput sequencing capacity for low-VAF variant detection [19] |

| Bioinformatics Tools | BWA-MEM, GATK, custom variant callers (e.g., Northstar Select pipeline) | Aligns sequences, identifies true variants, filters artifacts including CHIP [19] |

Step 1: Sample Collection and Processing

- Collect 10-20 mL peripheral blood into cell-stabilizing collection tubes (e.g., Cell-free DNA BCT Streck tubes)

- Process samples within 6 hours of collection: centrifuge at 1600×g for 20 minutes at 4°C to separate plasma

- Transfer supernatant to fresh tubes and perform a second centrifugation at 16,000×g for 10 minutes to remove residual cells

- Aliquot plasma and store at -80°C if not processing immediately [19]

Step 2: Cell-Free DNA Extraction

- Extract cfDNA from 2-10 mL plasma using silica membrane or magnetic bead-based kits

- Quantify cfDNA using fluorometric methods (e.g., Qubit dsDNA HS Assay)

- Assess cfDNA quality via capillary electrophoresis (e.g., Bioanalyzer High Sensitivity DNA kit)

- Expected yield: 5-50 ng cfDNA from 10 mL plasma, depending on tumor burden [19]
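
The yield range above sets a hard ceiling on sensitivity, because a variant can only be detected if mutant molecules are physically present in the extracted cfDNA. The following is a minimal sketch of that arithmetic, assuming roughly 3.3 pg of DNA per haploid human genome and Poisson sampling of mutant copies; the function names are illustrative and are not part of any cited pipeline.

```python
import math

# Assumption: one haploid human genome weighs about 3.3 pg.
PG_PER_HAPLOID_GENOME = 3.3


def genome_equivalents(cfdna_ng: float) -> float:
    """Approximate number of haploid genome copies in a cfDNA input (ng)."""
    return cfdna_ng * 1000.0 / PG_PER_HAPLOID_GENOME


def expected_mutant_copies(cfdna_ng: float, vaf: float) -> float:
    """Expected mutant molecules at one locus for a given variant allele fraction."""
    return genome_equivalents(cfdna_ng) * vaf


def prob_at_least_one_mutant(cfdna_ng: float, vaf: float) -> float:
    """Poisson probability that the input contains at least one mutant copy."""
    return 1.0 - math.exp(-expected_mutant_copies(cfdna_ng, vaf))


if __name__ == "__main__":
    # Low and high ends of the yield range, evaluated at 0.15% VAF.
    for ng in (5, 50):
        copies = expected_mutant_copies(ng, 0.0015)
        p = prob_at_least_one_mutant(ng, 0.0015)
        print(f"{ng} ng: ~{copies:.1f} mutant copies, P(>=1) = {p:.3f}")
```

At the low end of the yield range, only a handful of mutant copies are expected at 0.15% VAF before any losses in library preparation, which is why input mass and conversion efficiency matter as much as sequencing depth.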

Step 3: Library Preparation and Target Enrichment

- Convert 10-100 ng cfDNA into sequencing libraries using kits that incorporate unique molecular identifiers (UMIs)

- Amplify libraries with 8-12 PCR cycles, then assess quality and quantity

- Perform hybrid capture using predesigned panels (e.g., 84-gene panel for Northstar Select) [19]

- Use biotinylated probes for target enrichment, followed by magnetic bead purification

Step 4: Next-Generation Sequencing

- Pool barcoded libraries in equimolar ratios

- Sequence on Illumina platforms (NovaSeq 6000) to achieve minimum 10,000x raw coverage

- Target >99% of bases covered at 500x after deduplication for reliable detection of variants at 0.15% VAF [19]

Step 5: Bioinformatic Analysis

- Demultiplex raw sequencing data and align to reference genome (hg38) using BWA-MEM or similar aligner

- Process UMIs to generate consensus sequences and remove PCR duplicates

- Apply variant calling algorithms optimized for low-VAF detection

- Filter out variants associated with clonal hematopoiesis of indeterminate potential (CHIP) using population databases [19]

- Annotate variants using COSMIC, gnomAD, and clinical databases (OncoKB)
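
The UMI processing step in the workflow above can be illustrated with a simplified consensus builder. This is a sketch only: it assumes reads have already been aligned and reduced to hypothetical `(umi, start_position, sequence)` tuples, and it uses a plain majority vote, whereas production tools also weigh base qualities and duplex strands.

```python
from collections import Counter, defaultdict


def umi_consensus(reads, min_family_size=2):
    """Collapse reads sharing a UMI and start position into one consensus each.

    reads: iterable of (umi, start_position, sequence) tuples (hypothetical format).
    Families smaller than min_family_size are dropped as unsupported.
    """
    families = defaultdict(list)
    for umi, pos, seq in reads:
        families[(umi, pos)].append(seq)

    consensus = {}
    for key, seqs in families.items():
        if len(seqs) < min_family_size:
            continue
        length = min(len(s) for s in seqs)
        bases = []
        for i in range(length):
            base, count = Counter(s[i] for s in seqs).most_common(1)[0]
            # Keep a base only with strict majority support; otherwise mask it.
            bases.append(base if count / len(seqs) > 0.5 else "N")
        consensus[key] = "".join(bases)
    return consensus


if __name__ == "__main__":
    reads = [
        ("AAC", 100, "ACGT"),
        ("AAC", 100, "ACGT"),
        ("AAC", 100, "ACTT"),  # isolated PCR or sequencing error at position 2
        ("GGT", 100, "ACGT"),  # singleton family, discarded
    ]
    print(umi_consensus(reads))
```

Because an error must recur in most members of a family to survive the vote, consensus calling suppresses random PCR and sequencing errors that would otherwise look like low-VAF variants.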

Advanced Protocol for RNA Modification Analysis in Early Cancer Detection

This protocol outlines the RNA modification approach to early-stage cancer detection, which was reported to be more sensitive than existing non-invasive tests for early-stage colorectal cancer [18].

Step 1: Sample Collection and RNA Stabilization

- Collect blood in PAXgene Blood RNA tubes for immediate RNA stabilization

- Process within 2 hours: centrifuge at 1900×g for 10 minutes

- Isolate plasma followed by additional centrifugation at 16,000×g for 10 minutes

- Add RNA stabilization reagents to prevent degradation [18]

Step 2: Cell-Free RNA Extraction and Quality Control

- Extract total cfRNA using phenol-chloroform or silica membrane methods

- Treat with DNase I to remove contaminating DNA

- Quantify using RNA-specific fluorometric assays (e.g., Qubit RNA HS Assay)

- Assess RNA integrity via Bioanalyzer RNA Integrity Number (RIN >7.0 recommended) [18]

Step 3: RNA Modification Analysis

- Convert RNA to cDNA using reverse transcriptase with specific primers

- Perform quantitative analysis of RNA modifications (e.g., m6A, m5C) via mass spectrometry or antibody-based methods

- Analyze microbiome-derived RNA modifications using custom bioinformatic pipelines

- Report modification levels (the fraction of molecules modified at a site) rather than RNA abundance, since this ratio is more stable across samples [18]

Step 4: Statistical Analysis and Classification

- Apply machine learning algorithms to distinguish cancer vs. healthy samples

- Train classifiers on modification patterns from microbial and human RNA

- Validate model performance using independent sample sets

- Compare performance against the reported benchmark of 95% accuracy for early-stage colorectal cancer detection [18]

Analytical Validation and Performance Metrics of Liquid Biopsy Assays

Robust validation is essential for implementing liquid biopsy assays in research and clinical contexts. The table below compares the performance characteristics of current liquid biopsy technologies, highlighting advancements in detection sensitivity.

Table 3: Performance Comparison of Liquid Biopsy Detection Methods

| Assay/Method | Analytical Sensitivity | Variant Types Detected | Key Advantages | Recognized Limitations |

|---|---|---|---|---|

| Northstar Select | LOD: 0.15% VAF (SNV/Indels), 2.11 copies (CNV gain) [19] | SNV, Indel, CNV, Fusions, MSI [19] | 51% more pathogenic SNV/indels vs. comparators; 109% more CNVs [19] | Limited gene panel (84 genes) vs. comprehensive assays [19] |

| FoundationOne Liquid CDx | FDA-approved for multiple companion diagnostics [20] | SNV, Indel, CNV, Fusions, MSI, TMB [21] | Broad 300+ gene coverage; FDA-approved companion diagnostic status [21] | Lower sensitivity for CNVs in low tumor fraction samples [19] |

| Guardant360 CDx | FDA-approved for EGFR mutations in NSCLC [21] | SNV, Indel, CNV, Fusions [21] | FDA-approved; focused on clinically actionable variants [21] | Lower sensitivity below 0.5% VAF compared to newer assays [19] |

| RNA Modification Assay | 95% accuracy for early-stage CRC [18] | RNA modifications, microbiome changes | Exceptional early-stage sensitivity; microbial RNA provides additional signal [18] | Research-use only; not yet FDA-approved [18] |

| CellSearch (CTCs) | FDA-cleared for prognostic use in breast cancer [10] | CTC enumeration, phenotypic characterization | Only FDA-cleared CTC platform; prognostic validation [10] | Limited to EpCAM-positive cells; may miss mesenchymal CTCs [15] |

Recent advances in assay technology have substantially improved detection capabilities. The Northstar Select assay demonstrates a 95% limit of detection at 0.15% variant allele frequency for SNVs and indels, representing a significant improvement over earlier commercial assays [19]. In head-to-head comparisons, this enhanced sensitivity resulted in 51% more pathogenic SNVs/indels and 109% more copy number variants detected compared to existing commercial CGP liquid biopsy assays [19]. This improved performance is particularly valuable for low-shedding tumors and early-stage disease detection, where analyte concentration is minimal. For copy number variants, the assay achieves detection down to 2.11 copies for amplifications and 1.80 copies for losses, addressing a traditional weakness in liquid biopsy analysis [19].

Liquid biopsy technologies have fundamentally transformed the landscape of cancer detection and monitoring, providing researchers with powerful tools to study tumor dynamics non-invasively. The field continues to evolve rapidly, with ongoing research addressing current limitations while expanding applications. Key future directions include the development of multi-analyte approaches that combine DNA, RNA, and protein markers to improve sensitivity and specificity, especially for early-stage disease detection [15] [18]. Standardization of pre-analytical variables, analytical protocols, and bioinformatic pipelines remains a priority to ensure reproducibility across research laboratories [19]. Additionally, the integration of artificial intelligence and machine learning for pattern recognition in complex liquid biopsy data holds promise for further enhancing diagnostic accuracy [18]. As these technologies mature, liquid biopsies are poised to become increasingly integral to cancer research, drug development, and ultimately, clinical practice, potentially enabling a future where routine cancer screening is as simple as a blood test [22].

The escalating global cancer burden, with an estimated 20 million new cases and 9.7 million deaths in 2022 alone, underscores the critical need for transformative approaches in oncology [23]. Early detection remains a pivotal challenge, as timely intervention dramatically improves survival rates and treatment outcomes [24]. In this context, biomarkers (objective biological measures indicating normal or pathological processes) have become indispensable tools for decoding cancer complexity [23]. The evolution of high-throughput technologies has catalyzed a paradigm shift from single-analyte biomarkers to integrated multi-omics profiling, enabling unprecedented resolution in understanding tumor biology [25]. This section provides a technical analysis of the four core biomarker classes (genomic, epigenetic, transcriptomic, and proteomic) framed within their application to early cancer detection research. We detail the fundamental principles, profiling methodologies, clinical applications, and experimental protocols for each biomarker class, with particular emphasis on their integration through multi-omics strategies and artificial intelligence (AI) to advance precision oncology.

Biomarker Classes: Technical Foundations and Methodologies

Genomic Biomarkers

Genomic biomarkers encompass alterations at the DNA sequence level, including mutations, copy number variations (CNVs), single nucleotide polymorphisms (SNPs), and chromosomal rearrangements [25]. These alterations drive oncogenesis by activating oncogenes or inactivating tumor suppressor genes. Genomic instability, a hallmark of cancer, generates characteristic mutational patterns that can be leveraged for early detection.

Core Technologies and Workflows:

- Next-Generation Sequencing (NGS): Comprehensive genomic profiling utilizes whole exome sequencing (WES) and whole genome sequencing (WGS) to identify cancer-associated genetic variations across the genome [25]. The typical workflow involves DNA extraction, library preparation, sequencing, and bioinformatic analysis for variant calling.

- Tumor Mutational Burden (TMB) Calculation: TMB, defined as the total number of nonsynonymous mutations per megabase of genome sequenced, has emerged as a predictive biomarker for immunotherapy response. The KEYNOTE-158 trial validated TMB as a biomarker for pembrolizumab treatment across solid tumors, leading to FDA approval [25].

- Liquid Biopsy for Circulating Tumor DNA (ctDNA): This minimally invasive approach detects tumor-derived DNA fragments in blood plasma. Challenges include the low abundance and high fragmentation of ctDNA, particularly in early-stage disease [26]. Digital PCR (dPCR) and targeted NGS panels enable highly sensitive detection of known mutations, while error-corrected NGS methods facilitate discovery of novel variants.
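
The TMB definition above reduces to a simple ratio. The sketch below assumes the nonsynonymous mutation count and the number of adequately covered bases are already known; the default cutoff of 10 mutations/Mb is included as an illustrative threshold for "TMB-high" and should be treated as an assumption, since cutoffs are assay-specific.

```python
def tumor_mutational_burden(nonsynonymous_count: int, covered_bases: int) -> float:
    """Return TMB in mutations per megabase of sequenced territory."""
    if covered_bases <= 0:
        raise ValueError("covered_bases must be positive")
    return nonsynonymous_count / (covered_bases / 1_000_000)


def is_tmb_high(tmb: float, cutoff: float = 10.0) -> bool:
    """Classify a sample against an assumed, assay-specific cutoff (mut/Mb)."""
    return tmb >= cutoff


if __name__ == "__main__":
    # Hypothetical sample: 360 nonsynonymous mutations over a 30 Mb exome footprint.
    tmb = tumor_mutational_burden(360, 30_000_000)
    print(f"TMB = {tmb:.1f} mut/Mb, high = {is_tmb_high(tmb)}")
```

The denominator is the territory actually covered by the assay, so TMB values from a small targeted panel and from whole exome sequencing are not directly interchangeable without calibration.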

Table 1: Key Genomic Biomarkers in Early Cancer Detection

| Biomarker | Cancer Type | Detection Method | Clinical Utility |

|---|---|---|---|

| KRAS mutations | Colorectal, Pancreatic | NGS, dPCR | Predicts resistance to EGFR inhibitors [23] |

| EGFR mutations | Non-Small Cell Lung Cancer (NSCLC) | NGS, dPCR | Predicts response to EGFR tyrosine kinase inhibitors [23] |

| Tumor Mutational Burden (TMB) | Multiple solid tumors | NGS | Predictive biomarker for immunotherapy response [25] |

| BRCA1/2 mutations | Breast, Ovarian | NGS, Sanger sequencing | Hereditary risk assessment and PARP inhibitor response [23] |

| ctDNA quantification | Pan-cancer | dPCR, NGS | Monitoring treatment response and minimal residual disease [26] |

Epigenetic Biomarkers

Epigenetic modifications regulate gene expression without altering the DNA sequence. DNA methylation, the most studied epigenetic marker, involves addition of methyl groups to cytosine residues in CpG dinucleotides [27]. In cancer, global hypomethylation coincides with locus-specific hypermethylation of CpG islands in promoter regions, leading to genomic instability and silencing of tumor suppressor genes [27] [28]. These alterations often emerge early in tumorigenesis, making them ideal biomarkers for early detection [28].

DNA Methylation Analysis Workflow:

- Bisulfite Conversion: Treatment with sodium bisulfite deaminates unmethylated cytosines to uracils, while methylated cytosines remain unchanged, enabling methylation-specific detection.

- Library Preparation: For NGS-based methods, bisulfite-converted DNA undergoes library preparation with adapters compatible with sequencing platforms.

- Sequencing & Analysis: Bisulfite sequencing, microarrays, or targeted approaches generate methylation data, with bioinformatic pipelines determining methylation status at single-base resolution.
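
The logic of the workflow above can be sketched in a few lines: after bisulfite conversion, a cytosine that still reads as C was methylated, while one that reads as T was not. This simplified example assumes a forward-strand read already aligned base-for-base to its reference segment; real pipelines also handle the reverse strand, indels, and incomplete conversion.

```python
def cpg_methylation_calls(reference: str, read: str) -> dict:
    """Call methylation at each reference CpG covered by a bisulfite-converted read.

    Returns {position: True if methylated, False if unmethylated}.
    Assumes read and reference are the same length and aligned without gaps.
    """
    if len(reference) != len(read):
        raise ValueError("read must be aligned to a reference segment of equal length")
    calls = {}
    for i in range(len(reference) - 1):
        if reference[i:i + 2] == "CG":
            if read[i] == "C":
                calls[i] = True      # protected from conversion: methylated
            elif read[i] == "T":
                calls[i] = False     # converted C -> U -> T: unmethylated
            # any other base is treated as uninformative and skipped
    return calls


def beta_value(methylated_reads: int, unmethylated_reads: int):
    """Fraction of reads supporting methylation at a site, or None if uncovered."""
    total = methylated_reads + unmethylated_reads
    return methylated_reads / total if total else None


if __name__ == "__main__":
    print(cpg_methylation_calls("ACGTTCGA", "ACGTTTGA"))
    print(beta_value(8, 2))
```

Aggregating per-read calls into a beta value per CpG is what produces the single-base methylation levels that downstream classifiers consume.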

Advanced Detection Methods:

- Bisulfite-Free Sequencing: Emerging techniques like enzymatic methylation sequencing (EM-seq) and Tet-assisted pyridine borane sequencing (TAPS) preserve DNA integrity and minimize artifacts associated with bisulfite conversion [28].

- Third-Generation Sequencing: Single-Molecule Real-Time (SMRT) sequencing (PacBio) and nanopore sequencing (Oxford Nanopore Technologies) enable direct detection of DNA modifications without chemical pretreatment, providing long-read capabilities for analyzing fragmented ctDNA [28].

Table 2: DNA Methylation Detection Technologies

| Technology | Principle | Resolution | Throughput | Best Application |

|---|---|---|---|---|

| Whole-Genome Bisulfite Sequencing (WGBS) | Bisulfite conversion + NGS | Single-base | High | Comprehensive discovery [26] [28] |

| Reduced Representation Bisulfite Sequencing (RRBS) | Enzymatic digestion + bisulfite conversion | CpG-rich regions | Medium | Cost-effective profiling [28] |

| Methylation-Specific PCR (MSP) | Bisulfite conversion + methylation-specific primers | Locus-specific | Low | Targeted validation [28] |

| Illumina Methylation BeadChip | Array-based hybridization | 930,000 CpG sites | High | Population studies [28] |

| Enzymatic Methylation Sequencing (EM-seq) | Enzymatic conversion + NGS | Single-base | High | Preservation of DNA integrity [26] [28] |

Transcriptomic Biomarkers

Transcriptomics investigates the complete set of RNA transcripts, including messenger RNA (mRNA), microRNA (miRNA), long non-coding RNA (lncRNA), and other non-coding RNAs [25]. Gene expression signatures provide dynamic information about cellular states and tumor heterogeneity, reflecting both genetic and environmental influences.

Profiling Technologies:

- RNA Sequencing (RNA-Seq): This high-sensitivity approach provides comprehensive transcriptome profiling, enabling discovery of novel splice variants, fusion genes, and non-coding RNAs. The standard protocol includes RNA extraction, library preparation with poly-A selection or ribosomal RNA depletion, sequencing, and differential expression analysis.

- Single-Cell RNA Sequencing (scRNA-Seq): This technology resolves cellular heterogeneity within tumors by profiling gene expression at the level of individual cells, identifying rare cell populations and tumor microenvironment dynamics [25].

- Multi-Analyte Algorithms: Machine learning pipelines applied to transcriptomic data can identify minimal gene panels for cancer classification. For breast cancer, eight-gene panels selected through LASSO regularization achieve F1 Macro scores ≥80% for classifying non-malignant, non-triple-negative, and triple-negative subtypes [29].
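
The macro-averaged F1 score cited for the gene-panel classifiers treats every class equally, which matters when subtypes such as triple-negative disease are rare. The sketch below computes the metric from scratch; it does not reproduce the LASSO feature selection of the cited study, and the labels are placeholders.

```python
def macro_f1(y_true, y_pred) -> float:
    """Unweighted mean of per-class F1 scores."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for label in labels:
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the class never appears.
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


if __name__ == "__main__":
    # Placeholder labels standing in for three sample classes.
    truth = ["normal", "normal", "non_tnbc", "non_tnbc", "tnbc", "tnbc"]
    preds = ["normal", "normal", "non_tnbc", "tnbc", "tnbc", "tnbc"]
    print(f"macro F1 = {macro_f1(truth, preds):.3f}")
```

Unlike overall accuracy, this average drops sharply if the classifier ignores a minority class, so it is a stricter summary for imbalanced cohorts.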

Clinically Validated Applications:

- Oncotype DX: A 21-gene expression assay validated in the TAILORx trial to guide adjuvant chemotherapy decisions in hormone receptor-positive breast cancer [25].

- MammaPrint: A 70-gene signature assessed in the MINDACT trial for prognostic stratification in early-stage breast cancer [25].

Proteomic Biomarkers

Proteomics characterizes the entire complement of proteins, including their abundances, post-translational modifications (PTMs), and interactions [25]. As functional effectors of biological processes, proteins most directly reflect cellular phenotype and drug target engagement, making them invaluable biomarkers.

Analytical Platforms:

- Mass Spectrometry (MS): Liquid chromatography-tandem MS (LC-MS/MS) enables high-throughput protein identification and quantification. Data-independent acquisition (DIA) methods like SWATH-MS provide comprehensive proteome coverage with high reproducibility.

- Proximity Extension Assays (PEA): Platforms like Olink use pairs of antibodies carrying DNA tags; when both antibodies bind the same target, the tags hybridize and are extended into an amplifiable sequence, enabling highly multiplexed, sensitive protein quantification in minimal sample volumes.

- Reverse Phase Protein Arrays (RPPA): This high-throughput antibody-based method enables targeted quantification of specific proteins and their post-translational modifications across large sample cohorts.

Protein Biosensor Development:

- Transcriptomics-Guided Discovery: Machine learning analysis of transcriptomic data identifies highly expressed transmembrane proteins ideal for biosensor development. For breast cancer, biomarkers like ERBB2, MME, and ESR1 show strong predictive power for 5-year survival and are amenable to surface capture on diagnostic devices [29].

- Nanoparticle-Enhanced Detection: Engineered nanoparticles functionalized with specific binding molecules (antibodies, aptamers) enhance sensitivity for low-abundance protein biomarkers in liquid biopsies [23].

Table 3: Proteomic Biomarkers and Detection Technologies

| Biomarker | Cancer Type | Detection Technology | Clinical Utility |

|---|---|---|---|

| PSA | Prostate Cancer | Immunoassay | Screening and monitoring [23] |

| CA-125 | Ovarian Cancer | Immunoassay | Monitoring therapy response [23] |

| HER2/ER/PR | Breast Cancer | IHC, FISH | Treatment selection [23] |

| Multi-protein panels | Multiple cancers | Mass spectrometry, Multiplex immunoassays | Early detection (e.g., CancerSEEK) [23] [27] |

| PD-L1 | NSCLC, Melanoma | IHC | Predicts response to immune checkpoint inhibitors [23] |

Integrated Multi-Omics Approaches

The integration of multiple omics layers provides a more comprehensive understanding of cancer biology than any single approach. Multi-omics strategies can be categorized as horizontal integration (analyzing the same omics data type across different samples or conditions) or vertical integration (combining different omics data types from the same samples) [25].
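
The difference between the two integration modes can be made concrete with nested dictionaries. This is a toy sketch: the layer names, cohort names, feature names, and values are all invented for illustration, and real projects would use dedicated data frames and batch-effect correction.

```python
def vertical_integration(layers: dict) -> dict:
    """Combine different omics layers measured on the same samples.

    layers: {layer_name: {sample_id: {feature: value}}}
    Only samples present in every layer are kept.
    """
    if not layers:
        return {}
    shared = set.intersection(*(set(samples) for samples in layers.values()))
    merged = {}
    for sample_id in sorted(shared):
        row = {}
        for layer_name, samples in layers.items():
            for feature, value in samples[sample_id].items():
                row[f"{layer_name}:{feature}"] = value
        merged[sample_id] = row
    return merged


def horizontal_integration(cohorts: dict) -> dict:
    """Pool one omics data type across cohorts or conditions.

    cohorts: {cohort_name: {sample_id: {feature: value}}}
    Only features measured in every sample are kept.
    """
    rows = {
        f"{cohort}/{sample_id}": features
        for cohort, samples in cohorts.items()
        for sample_id, features in samples.items()
    }
    if not rows:
        return {}
    shared = set.intersection(*(set(f) for f in rows.values()))
    return {key: {feat: f[feat] for feat in sorted(shared)} for key, f in rows.items()}


if __name__ == "__main__":
    layers = {
        "methylation": {"S1": {"cpg_1": 0.8}, "S2": {"cpg_1": 0.1}},
        "protein": {"S1": {"protein_1": 5.2}},
    }
    print(vertical_integration(layers))
    cohorts = {
        "siteA": {"S1": {"gene_1": 1.0, "gene_2": 2.0}},
        "siteB": {"S9": {"gene_1": 3.0}},
    }
    print(horizontal_integration(cohorts))
```

Vertical integration widens each sample's feature vector, whereas horizontal integration lengthens the sample list, so the two approaches lose data in different ways when measurements are missing.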

Computational Integration Methods:

- AI-Driven Integration: Machine learning and deep learning algorithms effectively integrate diverse omics data types. Graph-based models and convolutional neural networks (CNNs) identify complex patterns across genomics, epigenomics, transcriptomics, and proteomics data [27] [25].

- Multi-Omics Databases: Public resources like The Cancer Genome Atlas (TCGA), Pan-Cancer Analysis of Whole Genomes (PCAWG), Clinical Proteomic Tumor Analysis Consortium (CPTAC), and DriverDBv4 provide curated multi-omics datasets for biomarker discovery and validation [25].

Clinical Applications:

- Multi-Cancer Early Detection (MCED) Tests: Assays like GRAIL's Galleri test utilize targeted methylation sequencing of ctDNA combined with machine learning to detect over 50 cancer types and predict tissue of origin [23] [27].

- CancerSEEK: This blood test integrates mutations in 16 genes with circulating protein biomarkers for detecting eight common cancer types [23] [27].

Multi-Omics Integration Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagents for Biomarker Discovery

| Reagent/Material | Function | Application Examples |

|---|---|---|

| Cell-free DNA Blood Collection Tubes | Preserves ctDNA by inhibiting nucleases | Liquid biopsy studies; stabilizing blood samples for transport [26] |

| Bisulfite Conversion Kits | Chemical conversion of unmethylated cytosine to uracil | DNA methylation analysis (MSP, WGBS, arrays) [28] |

| Methylated DNA Immunoprecipitation (MeDIP) Kits | Antibody-based enrichment of methylated DNA | Methylome profiling without bisulfite conversion [26] |

| Next-Generation Sequencing Library Prep Kits | Preparation of DNA/RNA libraries for sequencing | Whole genome, exome, transcriptome, methylome sequencing [25] [28] |

| Multiplex Immunoassay Panels | Simultaneous quantification of multiple proteins | Validation of protein biomarker panels; verification of transcriptomic findings [30] |

| Single-Cell Isolation Kits | Isolation of individual cells for omics analysis | Single-cell RNA sequencing; tumor heterogeneity studies [25] |

| Mass Spectrometry Grade Trypsin | Protein digestion for mass spectrometry analysis | Bottom-up proteomics; PTM characterization [25] |

| CRISPR-Based Modification Tools | Targeted epigenetic or genetic modification | Functional validation of biomarker candidates [27] |

The convergence of genomic, epigenetic, transcriptomic, and proteomic biomarker technologies represents a paradigm shift in early cancer detection. While each biomarker class provides unique biological insights, their integration through multi-omics approaches and AI-powered analytics offers the most promising path toward comprehensive cancer diagnostics. DNA methylation biomarkers, particularly when detected in liquid biopsies, show exceptional promise due to their early emergence in tumorigenesis and technical stability [26] [28]. Transcriptomic and proteomic profiling provide functional validation of genetic and epigenetic findings, enabling development of clinically actionable biomarker panels.

Significant challenges remain in standardizing analytical protocols, validating biomarkers across diverse populations, and demonstrating clinical utility in prospective trials. Furthermore, as recent studies indicate, translational implementation faces practical barriers, with only approximately one-third of advanced cancer patients receiving recommended biomarker testing despite established guidelines [31]. Future research must prioritize the development of cost-effective, accessible technologies that can equitably deliver on the promise of precision oncology. Through continued innovation in multi-omics integration and AI-driven biomarker discovery, these molecular tools will increasingly enable detection of cancer at its most treatable stages, ultimately transforming cancer care outcomes globally.

Biomarker Discovery & Clinical Translation Pipeline

The Limitations of Traditional Biomarkers and the Need for Innovation

Cancer biomarkers are biological molecules—such as proteins, genes, or metabolites—that can be objectively measured to indicate the presence, progression, or behavior of cancer. These markers are indispensable in modern oncology, playing pivotal roles in early detection, diagnosis, treatment selection, and monitoring of therapeutic responses [23] [32]. As cancer continues to be a leading cause of mortality worldwide—with an estimated 20 million new cases and 9.7 million deaths in 2022 alone—the development and application of biomarkers have become essential for improving patient outcomes and advancing precision medicine [23]. The importance of biomarkers lies in their ability to provide actionable insights into a disease that is notoriously complex and heterogeneous. From screening asymptomatic populations to tailoring therapies to individual patients, biomarkers are bridging the gap between basic research and clinical practice [23].

Despite their established role in oncology, traditional biomarkers face significant limitations that reduce their clinical utility, particularly for early detection. This section examines the technical shortcomings of established biomarkers, explores emerging technologies and approaches that are addressing these limitations, and provides detailed experimental methodologies for researchers working at the forefront of cancer biomarker discovery. As the field undergoes a technological renaissance driven by breakthroughs in multi-omics, spatial biology, artificial intelligence (AI), and high-throughput analytics [33], understanding both the constraints of conventional approaches and the promise of emerging innovations becomes crucial for advancing cancer detection and personalized treatment paradigms.

Limitations of Traditional Cancer Biomarkers

Fundamental Technical and Biological Constraints

Traditional cancer biomarkers, including prostate-specific antigen (PSA), cancer antigen 125 (CA-125), carcinoembryonic antigen (CEA), and cancer antigen 19-9 (CA 19-9), exhibit critical limitations that impact their diagnostic and prognostic performance. These constraints primarily revolve around insufficient sensitivity and specificity, biological variability, and late emergence in disease progression [23] [32].

The deficiency in sensitivity and specificity presents the most significant challenge for early detection. For example, PSA levels can rise due to benign conditions like prostatitis or benign prostatic hyperplasia, leading to false positives and unnecessary invasive procedures [23]. Similarly, CA-125 is not exclusive to ovarian cancer and can be elevated in other cancers or non-malignant conditions, such as endometriosis [23]. This lack of specificity necessitates careful interpretation of results and often requires further investigation, increasing healthcare costs and patient anxiety.

A fundamental biological limitation of many established biomarkers is that they frequently do not emerge until the cancer is already advanced, substantially reducing their value in early detection when intervention is most effective [23]. The inability to detect molecular changes during the initial stages of carcinogenesis represents a critical gap in cancer screening capabilities. Additionally, single-biomarker approaches often fail to capture the complex heterogeneity of cancer, leading to incomplete biological characterization and limited clinical utility [23] [33].

Clinical and Statistical Challenges in Application

The technical limitations of traditional biomarkers translate directly into substantial clinical challenges, including overdiagnosis, overtreatment, and statistical artifacts that complicate the interpretation of screening benefits [34].

The consequences of these limitations are staggering in both human and economic terms. In 2021 alone, according to one estimate, the United States spent more than forty billion dollars on cancer screening [34]. On average, a year's worth of screenings yields nine million positive results—of which 8.8 million are false positives [34]. This means millions of patients endure follow-up scans, biopsies, and associated anxiety so that just over two hundred thousand true positives can be found, of which an even smaller fraction can be cured by local treatment like excision.
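
The figures in this paragraph imply a very low positive predictive value (PPV), and the arithmetic is worth making explicit. The first function below uses the counts quoted above; the second shows the general Bayes relationship, with sensitivity, specificity, and prevalence values that are purely hypothetical.

```python
def ppv_from_counts(true_positives: float, false_positives: float) -> float:
    """Share of positive results that are true positives."""
    return true_positives / (true_positives + false_positives)


def ppv_from_rates(sensitivity: float, specificity: float, prevalence: float) -> float:
    """PPV via Bayes' rule for a test applied to a screened population."""
    true_pos_rate = sensitivity * prevalence
    false_pos_rate = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos_rate / (true_pos_rate + false_pos_rate)


if __name__ == "__main__":
    # Counts quoted in the text: 9.0 million positives, 8.8 million of them false.
    print(f"PPV from counts: {ppv_from_counts(200_000, 8_800_000):.1%}")
    # Hypothetical test: 90% sensitive, 90% specific, 1% disease prevalence.
    print(f"PPV from rates:  {ppv_from_rates(0.90, 0.90, 0.01):.1%}")
```

Both calculations make the same point: when a disease is rare in the screened population, even a reasonably specific test produces mostly false positives.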

Statistical distortions further complicate the assessment of screening effectiveness. Lead-time bias creates the illusion of extended survival without actually prolonging life. This occurs when screening detects cancer earlier in the disease course, thereby increasing the measured time between diagnosis and death without affecting the actual time of death [34]. Overdiagnosis bias arises when screening disproportionately detects indolent, slow-growing tumors that would never have become clinically significant during a patient's lifetime [34]. These statistical artifacts can misleadingly inflate the perceived benefits of screening programs based on traditional biomarkers.
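
Lead-time bias is easiest to see with a single hypothetical patient whose age at death is fixed regardless of how the cancer is found. The ages below are invented for illustration only.

```python
def measured_survival_years(diagnosis_age: float, death_age: float) -> float:
    """Survival as it appears in registry data: time from diagnosis to death."""
    return death_age - diagnosis_age


def lead_time_years(symptomatic_dx_age: float, screen_dx_age: float) -> float:
    """How much earlier screening moves the date of diagnosis."""
    return symptomatic_dx_age - screen_dx_age


if __name__ == "__main__":
    # Hypothetical patient: death at 70 whether or not the cancer is screen-detected.
    death_age = 70
    symptomatic_dx_age = 67
    screen_dx_age = 63

    without_screening = measured_survival_years(symptomatic_dx_age, death_age)
    with_screening = measured_survival_years(screen_dx_age, death_age)

    print(f"Survival without screening: {without_screening} years")
    print(f"Survival with screening:    {with_screening} years")
    print(f"Lead time:                  {lead_time_years(symptomatic_dx_age, screen_dx_age)} years")
    print(f"Counts as 5-year survivor:  {without_screening >= 5} -> {with_screening >= 5}")
```

The patient moves from a five-year non-survivor to a five-year survivor without living a day longer, which is why mortality rather than survival from diagnosis is the preferred endpoint for evaluating screening programs.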

Table 1: Limitations of Established Traditional Cancer Biomarkers

| Biomarker | Associated Cancer | Key Limitations | Clinical Consequences |
|---|---|---|---|
| PSA (Prostate-Specific Antigen) | Prostate | Elevated in benign conditions (prostatitis, BPH); Poor specificity [23] | Unnecessary biopsies, patient anxiety, overtreatment |
| CA-125 (Cancer Antigen 125) | Ovarian | Elevated in other cancers and non-malignant conditions (endometriosis) [23] | False positives, unnecessary invasive procedures |
| CEA (Carcinoembryonic Antigen) | Colorectal, Liver | Limited sensitivity for early-stage disease; Can be elevated in non-cancer conditions [1] | Limited utility for early detection; False positives |
| CA 19-9 (Cancer Antigen 19-9) | Pancreatic, Colon | Limited sensitivity for early disease; Elevated in benign gastrointestinal conditions [1] | Poor early detection capability; False positives |
| AFP (Alpha-fetoprotein) | Liver (HCC) | 30% of hepatocellular carcinomas show no AFP elevation [35] | Missed diagnoses if used as sole biomarker |

Emerging Biomarkers and Innovative Approaches

Novel Biomarker Classes and Their Advantages

Emerging biomarker classes are overcoming the limitations of traditional approaches by leveraging molecular characteristics that reflect the fundamental biology of cancer development and progression. These innovative biomarkers include circulating tumor DNA (ctDNA), circulating tumor cells (CTCs), microRNAs (miRNAs), exosomes, and various epigenetic markers [23] [1].

Circulating tumor DNA (ctDNA) consists of DNA fragments shed by tumor cells into the bloodstream. Unlike traditional protein biomarkers, ctDNA carries tumor-specific genetic and epigenetic alterations, offering higher cancer specificity [23] [35]. ctDNA analysis can detect mutations in genes such as KRAS, EGFR, and TP53 before the disease is clinically apparent, providing a window for earlier intervention [23]. Additionally, ctDNA levels can be quantified to monitor tumor burden and treatment response, enabling dynamic assessment of disease progression [35].

Circulating tumor cells (CTCs) are intact cancer cells that have detached from the primary tumor and entered the circulation. These cells serve as valuable biomarkers for assessing metastatic potential and studying the biological characteristics of tumors through functional analyses and single-cell sequencing [23]. The enumeration and molecular characterization of CTCs provide insights into cancer biology that are complementary to ctDNA analyses.

Exosomes and other extracellular vesicles (EVs) are membrane-bound nanoparticles released by cells that contain proteins, nucleic acids, and metabolites from their cell of origin. Tumor-derived exosomes carry molecular information reflective of their parental cells and play important roles in cell-cell communication within the tumor microenvironment [23] [1]. The stability of exosomes in circulation and their molecular complexity make them promising biomarker sources.

MicroRNAs (miRNAs) are small non-coding RNAs that regulate gene expression and are frequently dysregulated in cancer. Their stability in bodily fluids, resistance to degradation, and cancer-specific expression patterns make them attractive biomarker candidates [1]. miRNA signatures can distinguish cancer types and provide prognostic information beyond conventional markers.

Technological Innovations in Biomarker Analysis

Revolutionary technologies are transforming how biomarkers are detected, analyzed, and implemented in clinical practice. These innovations address the limitations of traditional biomarker approaches through enhanced sensitivity, multiplexing capabilities, and computational integration.

Liquid biopsy represents a paradigm shift in cancer detection by enabling non-invasive sampling and analysis of tumor-derived materials from blood or other bodily fluids [23] [1]. This approach can reduce the need for invasive tissue biopsies, allows for real-time monitoring of treatment responses, and facilitates the detection of cancers that are difficult to access through conventional methods. Liquid biopsies are particularly valuable for capturing tumor heterogeneity, because the circulating material they sample can originate from multiple tumor sites at once [35].

Multi-omics integration combines data from genomic, epigenomic, transcriptomic, proteomic, and metabolomic analyses to provide a comprehensive view of cancer biology [23] [33]. This approach recognizes that cancer cannot be fully characterized by any single molecular dimension and that integrating multiple data types reveals emergent biological insights. Multi-analyte tests such as CancerSEEK combine ctDNA mutations with protein biomarkers, and other multi-cancer assays add methylation profiles, to detect multiple cancer types simultaneously with encouraging sensitivity and specificity [23].

Artificial intelligence (AI) and machine learning (ML) are revolutionizing biomarker discovery and application by identifying subtle patterns in complex datasets that human observers might miss [23] [33]. AI/ML algorithms integrate and analyze various molecular data types with imaging to enhance diagnostic accuracy and therapy recommendations. These technologies are particularly powerful for predicting treatment responses, recurrence risk, and patient outcomes based on multimodal data [33].

Spatial biology techniques, including spatial transcriptomics and multiplex immunohistochemistry, allow researchers to study biomarker expression within the tissue architecture without disrupting spatial relationships [33]. This preservation of spatial context is crucial for understanding the tumor microenvironment, cellular interactions, and heterogeneity—factors that significantly influence cancer behavior and treatment response.

Table 2: Emerging Biomarker Classes and Their Clinical Applications

| Biomarker Class | Molecular Components | Key Advantages | Current Applications |
|---|---|---|---|
| Circulating Tumor DNA (ctDNA) | Tumor-derived DNA fragments with genetic/epigenetic alterations [23] [35] | High specificity; Non-invasive; Allows monitoring of tumor dynamics; Early detection potential | Treatment response monitoring; Minimal residual disease detection; Early cancer detection [23] [35] |
| Circulating Tumor Cells (CTCs) | Intact tumor cells in circulation [23] | Provides living cells for functional studies; Assess metastatic potential | Prognostic assessment; Drug sensitivity testing [23] |
| Exosomes/Extracellular Vesicles | Proteins, nucleic acids, metabolites from parent cells [23] [1] | Molecular complexity; Stability in circulation; Cell-cell communication insights | Biomarker discovery; Understanding tumor microenvironment [23] [1] |
| MicroRNAs (miRNAs) | Small non-coding RNAs [1] | Stability in bodily fluids; Disease-specific signatures; Regulatory roles | Diagnostic and prognostic signatures for multiple cancers [1] |
| Multi-cancer Early Detection (MCED) Panels | Combined ctDNA mutations, methylation, protein biomarkers [23] | Detects multiple cancer types simultaneously; Identifies tissue of origin | Population screening (e.g., Galleri test); Risk stratification [23] |

Experimental Protocols and Methodologies

Liquid Biopsy and ctDNA Analysis Workflow

The analysis of ctDNA from liquid biopsies requires highly sensitive and standardized methodologies to detect the rare tumor-derived fragments amidst the abundant background of normal cell-free DNA. The following protocol outlines the key steps in ctDNA analysis for early cancer detection applications.

Sample Collection and Processing: Collect whole blood (typically 10-20 mL) in Streck Cell-Free DNA BCT or similar specialized collection tubes that preserve cell-free DNA and prevent genomic DNA contamination from white blood cell lysis [35]. Process samples within 6 hours of collection by double centrifugation (e.g., 1600 × g for 10 minutes followed by 16,000 × g for 10 minutes) to obtain platelet-poor plasma. Store plasma at -80°C until DNA extraction.

Cell-free DNA Extraction: Extract cfDNA from plasma (typically 2-5 mL) using commercially available silica membrane-based kits or magnetic bead technologies. Automated extraction systems are preferred for consistency and throughput. Quantify extracted cfDNA using fluorometric methods (e.g., Qubit) and assess fragment size distribution using bioanalyzer systems to confirm the characteristic ~167 bp nucleosomal fragmentation pattern.

Library Preparation and Target Enrichment: Prepare sequencing libraries from 10-100 ng of cfDNA using kits specifically optimized for low-input and degraded DNA. For mutation-based detection, hybrid capture or amplicon-based target enrichment approaches are used to focus sequencing on cancer-relevant genomic regions. Pan-cancer panels typically include genes frequently mutated across multiple cancer types (e.g., TP53, KRAS, EGFR, PIK3CA) [35].
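The cfDNA input mass sets a hard ceiling on sensitivity, because a variant cannot be sequenced if no mutant molecule is present in the tube. The sketch below converts input mass to haploid genome equivalents, assuming the commonly used approximation of about 3.3 pg per haploid human genome (an assumption not stated in the protocol above); function names are illustrative.

```python
# Approximate mass of one haploid human genome in picograms (assumed value).
PG_PER_HAPLOID_GENOME = 3.3


def haploid_genome_equivalents(cfdna_ng: float) -> float:
    """Number of haploid genome copies in a given mass of cfDNA."""
    return cfdna_ng * 1000.0 / PG_PER_HAPLOID_GENOME


def expected_mutant_copies(cfdna_ng: float, vaf: float) -> float:
    """Expected mutant copies at one locus for a variant allele fraction."""
    return haploid_genome_equivalents(cfdna_ng) * vaf


for input_ng in (10, 100):
    copies = haploid_genome_equivalents(input_ng)
    mutant = expected_mutant_copies(input_ng, vaf=0.001)  # 0.1% VAF
    print(f"{input_ng} ng: ~{copies:.0f} genome copies, ~{mutant:.1f} mutant copies")
```

At the low end of the 10-100 ng range, a 0.1% variant is represented by only about three molecules per locus before any losses in library preparation, which is one reason multi-locus panels and higher plasma volumes are favored for early detection.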

For methylation-based analyses, treat DNA with bisulfite to convert unmethylated cytosine residues to uracil while leaving methylated cytosines unchanged. Alternatively, use enzymatic conversion methods that reduce DNA damage [35]. Subsequently, perform targeted sequencing of cancer-specific methylation markers or genome-wide methylation profiling.
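The logic of bisulfite conversion can be illustrated in silico. In the minimal sketch below, unmethylated cytosines are written as T (uracil is read as thymine after amplification) while methylated cytosines stay as C; the per-site methylation level is then the fraction of C calls among C and T calls. The function names, the 0-based position convention, and the toy sequence are assumptions for illustration, and strand handling is ignored.

```python
from typing import Iterable, Sequence


def bisulfite_convert(sequence: str, methylated_positions: Iterable[int]) -> str:
    """Simulate conversion of one strand: unmethylated C -> T, methylated C kept."""
    protected = set(methylated_positions)  # 0-based indices of methylated cytosines
    return "".join(
        "T" if base == "C" and i not in protected else base
        for i, base in enumerate(sequence)
    )


def methylation_fraction(base_calls: Sequence[str]) -> float:
    """Methylation level at one cytosine from the bases observed across reads."""
    methylated = base_calls.count("C")
    unmethylated = base_calls.count("T")
    if methylated + unmethylated == 0:
        raise ValueError("no informative reads at this site")
    return methylated / (methylated + unmethylated)


template = "ACGTCCGA"                       # cytosines at indices 1, 4, 5
converted = bisulfite_convert(template, methylated_positions=[1])
print(converted)                            # ACGTTTGA

site_calls = ["C", "C", "T", "C"]           # calls from four reads at one CpG
print(methylation_fraction(site_calls))     # 0.75
```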

Next-generation Sequencing and Data Analysis: Sequence libraries on a high-throughput short-read platform (e.g., Illumina NovaSeq) to achieve sufficient coverage (typically 10,000-50,000×) for detecting low-frequency variants. For fragmentomics approaches, analyze cfDNA fragmentation patterns, including fragment size distribution, end motifs, and nucleosomal positioning [35].
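Why such deep coverage is needed follows from simple sampling statistics. The sketch below treats the number of variant-supporting reads at a site as binomial in the depth and the variant allele fraction (VAF), and computes the chance of seeing at least a minimum number of supporting reads. The threshold of five reads is an arbitrary illustrative choice, and the model ignores sequencing error, duplicate reads, and the limited number of input molecules.

```python
from math import comb


def prob_at_least_k_variant_reads(depth: int, vaf: float, k: int) -> float:
    """P(X >= k) for X ~ Binomial(depth, vaf)."""
    p_fewer = sum(
        comb(depth, i) * vaf**i * (1.0 - vaf) ** (depth - i) for i in range(k)
    )
    return 1.0 - p_fewer


vaf = 0.001          # 0.1% variant allele fraction
min_reads = 5        # hypothetical minimum supporting reads to make a call

for depth in (1_000, 10_000, 50_000):
    p = prob_at_least_k_variant_reads(depth, vaf, min_reads)
    print(f"depth {depth:>6}x: P(>= {min_reads} variant reads) = {p:.3f}")
```

Under these assumptions a 0.1% variant is almost never callable at 1,000× but is usually callable at 10,000×, consistent with the coverage range given above.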

Bioinformatic processing includes: (1) adapter trimming and quality control; (2) alignment to reference genome; (3) duplicate removal; (4) variant calling using specialized algorithms optimized for low variant allele frequencies; (5) methylation state analysis for bisulfite sequencing data; and (6) machine learning-based classification to distinguish cancer from non-cancer samples and predict tissue of origin [35].
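Step (3) deserves particular care at low allele frequencies, since PCR duplicates can inflate or mask a rare variant. One common strategy, which the protocol above does not specify, is to tag molecules with unique molecular identifiers (UMIs) and collapse each read family to a consensus. The sketch below assumes reads are already aligned and represented as simple tuples; the data layout and function name are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Tuple

Read = Tuple[str, int, int, str, str]        # (chrom, start, end, umi, sequence)
FamilyKey = Tuple[str, int, int, str]


def collapse_duplicates(reads: Iterable[Read]) -> Dict[FamilyKey, str]:
    """Group reads by position and UMI, then take a per-base majority vote.

    Assumes all reads in a family have the same length; ties are resolved
    in favor of the base seen first.
    """
    families = defaultdict(list)
    for chrom, start, end, umi, seq in reads:
        families[(chrom, start, end, umi)].append(seq)

    consensus = {}
    for key, seqs in families.items():
        consensus[key] = "".join(
            Counter(column).most_common(1)[0][0] for column in zip(*seqs)
        )
    return consensus


reads = [
    ("chr12", 100, 104, "AACG", "ACGT"),
    ("chr12", 100, 104, "AACG", "ACGT"),
    ("chr12", 100, 104, "AACG", "ACTT"),   # likely PCR or sequencing error
    ("chr12", 100, 104, "GGTA", "ACTT"),   # different molecule, same position
]
molecules = collapse_duplicates(reads)
print(len(molecules))                               # 2
print(molecules[("chr12", 100, 104, "AACG")])       # ACGT
```

Four reads collapse to two original molecules, and the isolated mismatch in the first family is voted out while the second molecule's base is preserved for variant calling.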

Figure 1: Liquid Biopsy and ctDNA Analysis Workflow. This diagram illustrates the key steps in processing liquid biopsy samples for circulating tumor DNA analysis, from blood collection through bioinformatic interpretation.

Multi-omics Integration Protocol

Integrating multiple molecular data types provides a comprehensive view of cancer biology that surpasses the limitations of single-analyte approaches. The following protocol outlines a standardized workflow for multi-omics biomarker discovery and validation.

Sample Preparation and Multi-omics Data Generation: Process matched tumor tissue, adjacent normal tissue, and blood samples from the same patient. For each sample type, isolate: (1) DNA for whole-genome or whole-exome sequencing to identify somatic mutations, copy number alterations, and structural variants; (2) RNA for transcriptome sequencing (RNA-seq) to quantify gene expression, alternative splicing, and fusion genes; (3) protein lysates for proteomic analysis using mass spectrometry or multiplex immunoassays; and (4) metabolites for metabolomic profiling using LC-MS or GC-MS platforms.

Data Preprocessing and Quality Control: Perform platform-specific quality control for each data type. For genomic data: assess sequencing depth, coverage uniformity, and base quality scores. For transcriptomic data: evaluate RNA integrity, library complexity, and gene body coverage. For proteomic data: monitor peptide identification rates, mass accuracy, and reproducibility. For metabolomic data: assess peak detection, retention time stability, and internal standard recovery.

Multi-omics Data Integration and Analysis: Employ computational frameworks to integrate the multi-dimensional data. Common approaches include: (1) Concatenation-based integration: merging features from different omics layers into a unified matrix for downstream analysis; (2) Transformation-based methods: using dimensionality reduction techniques (e.g., Multi-Omics Factor Analysis) to identify shared latent factors across data types; (3) Model-based integration: employing Bayesian networks or kernel methods to model relationships between different molecular layers; (4) Network-based approaches: constructing molecular interaction networks that incorporate genomic, transcriptomic, and proteomic data.
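Of these approaches, concatenation-based integration is the simplest to illustrate. The sketch below scales each feature within its own omics layer and then joins the layers sample by sample into one matrix. It is a minimal illustration with toy values, assuming every layer lists the same samples in the same order; real pipelines would also handle missing data and batch effects.

```python
from statistics import mean, pstdev
from typing import Dict, List

Matrix = List[List[float]]  # rows = samples, columns = features


def zscore_columns(matrix: Matrix) -> Matrix:
    """Scale each feature (column) to zero mean and unit variance."""
    scaled_columns = []
    for column in zip(*matrix):
        mu, sd = mean(column), pstdev(column)
        # Constant features carry no information; map them to 0.0.
        scaled_columns.append([(v - mu) / sd if sd > 0 else 0.0 for v in column])
    return [list(row) for row in zip(*scaled_columns)]


def concatenate_layers(layers: Dict[str, Matrix]) -> Matrix:
    """Z-score each omics layer, then join features sample by sample."""
    sample_counts = {len(m) for m in layers.values()}
    if len(sample_counts) != 1:
        raise ValueError("all layers must describe the same samples")
    scaled = [zscore_columns(m) for m in layers.values()]
    n_samples = sample_counts.pop()
    return [[v for layer in scaled for v in layer[i]] for i in range(n_samples)]


# Toy data: three samples, two RNA features and one protein feature.
layers = {
    "rna": [[5.0, 1.0], [7.0, 3.0], [9.0, 5.0]],
    "protein": [[0.2], [0.4], [0.9]],
}
unified = concatenate_layers(layers)
print(len(unified), len(unified[0]))  # 3 3
```

Scaling within each layer before joining keeps a layer with large raw values (for example, read counts) from dominating one measured on a smaller scale.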

Biomarker Signature Development and Validation: Apply machine learning algorithms (e.g., random forests, support vector machines, neural networks) to identify multi-omics patterns predictive of diagnosis, prognosis, or treatment response [33]. Use cross-validation and independent cohort testing to assess signature performance. Compare multi-omics signatures against single-omics biomarkers to demonstrate added clinical value.
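The cross-validation step can be sketched without any external library. The example below swaps in a nearest-centroid classifier in place of the random forests or neural networks named above, purely to keep the code short, and uses a deterministic fold assignment on a toy dataset; all names and values are illustrative.

```python
from statistics import mean
from typing import Dict, List, Sequence


def fit_centroids(X: Sequence[Sequence[float]], y: Sequence[str]) -> Dict[str, List[float]]:
    """Compute the mean feature vector of each class."""
    centroids = {}
    for label in set(y):
        rows = [x for x, lab in zip(X, y) if lab == label]
        centroids[label] = [mean(col) for col in zip(*rows)]
    return centroids


def predict(centroids: Dict[str, List[float]], x: Sequence[float]) -> str:
    """Assign the class whose centroid is closest in squared Euclidean distance."""
    def sqdist(c: Sequence[float]) -> float:
        return sum((a - b) ** 2 for a, b in zip(x, c))
    return min(centroids, key=lambda label: sqdist(centroids[label]))


def cross_validated_accuracy(X, y, k: int = 3) -> float:
    """k-fold cross-validation; sample i is held out in fold i % k."""
    correct = 0
    for fold in range(k):
        train = [i for i in range(len(X)) if i % k != fold]
        test = [i for i in range(len(X)) if i % k == fold]
        # The model is refit on the training folds only, so held-out
        # samples never influence the centroids they are scored against.
        centroids = fit_centroids([X[i] for i in train], [y[i] for i in train])
        correct += sum(predict(centroids, X[i]) == y[i] for i in test)
    return correct / len(X)


# Toy two-feature signature for six samples.
X = [[0.1, 0.2], [0.9, 1.0], [0.2, 0.1], [1.0, 0.8], [0.0, 0.3], [0.8, 0.9]]
y = ["control", "cancer", "control", "cancer", "control", "cancer"]

print(cross_validated_accuracy(X, y, k=3))  # 1.0
```

The same principle applies to any preprocessing that learns from the data: feature selection and scaling should be repeated inside each training fold, otherwise the cross-validated estimate will be optimistic. Running the same function on a single-omics subset of the features gives a direct comparison against the multi-omics signature.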